Shifting the retinal foundation models paradigmfrom slices to volumes for optical coherencetomography

- Joachim Behar

- Mar 18

- 3 min read

By Raphael Judkiewicz, Eran Berkowitz, Meishar Meisel, Tomer Michaeli & Joachim A. Behar

We’re excited to share our new publication, “Shifting the retinal foundation models paradigm from slices to volumes for optical coherence tomography,” now available in npj Digital Medicine. URL: https://www.nature.com/articles/s41746-026-02496-7

Optical coherence tomography, or OCT, is one of the most important imaging tools in ophthalmology. It gives clinicians a detailed cross-sectional view of the retina and plays a central role in diagnosing diseases like age-related macular degeneration and glaucoma. But despite OCT being inherently three-dimensional, most AI foundation models for OCT still rely on a single 2D slice, usually the middle B-scan. Our new work asks a simple question: what are we missing when we ignore the rest of the volume?

Why this matters

In real clinical practice, ophthalmologists do not diagnose retinal disease from one slice alone. They scroll through the full OCT volume, looking for patterns that extend across slices and subtle findings that may not appear in the center scan. Existing OCT foundation models have made major progress, but their single-slice design leaves a lot of potentially useful information on the table. Our study shows that moving from 2D slices to full volumetric modeling can make a real difference.

What we did

We evaluated whether a video foundation model, V-JEPA, could be repurposed to analyze OCT volumes as sequences of slices. In this setup, the OCT scan is treated like a short video: instead of frames over time, the model sees slices across retinal depth. We then compared this volumetric approach against leading image-based foundation models, including RETFound, VisionFM, and DINOv2.

To test robustness, we fine-tuned and evaluated the models across five independent OCT datasets from four geographic regions, covering both age-related macular degeneration (AMD) and glaucomatous optic neuropathy (GON). These datasets included scans from the US, China, Iran, and Israel.

Overview of the research. (a) Geographic distribution of the datasets used in the

experiments. (b) Comparison between video and image foundation models for OCT analysis.

(c) Key results: Left: Performance benchmark of video, general image, and retinal image

foundation models. Middle: Model performance as a function of training set size. Right:

Visualization of attention maps from the video foundation model across the full OCT volume.

BioRender.com was used to produce this figure.

What we found

The results were clear: V-JEPA consistently matched or outperformed image-based foundation models. Across all datasets, it achieved an average AUROC of 0.94, compared with 0.90 for the best-performing image model, a statistically significant improvement.

The biggest gain appeared on the CirrusOCT glaucoma dataset, where V-JEPA reached an AUROC of 0.94, compared with 0.88 for the next-best model. On more challenging data, the volumetric model still outperformed all single-slice approaches.

We also found that the benefits of volumetric modeling extended beyond headline performance:

V-JEPA continued to improve as training data increased, while image-based models tended to plateau earlier.

It outperformed a 3D CNN trained from scratch across all evaluated datasets.

It also beat a 2.5D adaptation of DINOv2 on four of five datasets.

Why volumes help

The reason seems intuitive: retinal disease is often not confined to a single slice. Some abnormalities are sparse, some are distributed across neighboring slices, and some only become interpretable when seen in context.

Our attention-map analyses support this idea. V-JEPA tracks relevant anatomical features across multiple B-scans, while a single-image model focuses on a much narrower view. Even when comparing the same middle slice, volumetric context appears to help the model attend more precisely to clinically relevant structures.

We also found that in many cases where the single-slice model failed but V-JEPA succeeded, pathology was either outside the middle slice or better understood when surrounding slices were available. In other words, the volume contains signal that a central snapshot can miss.

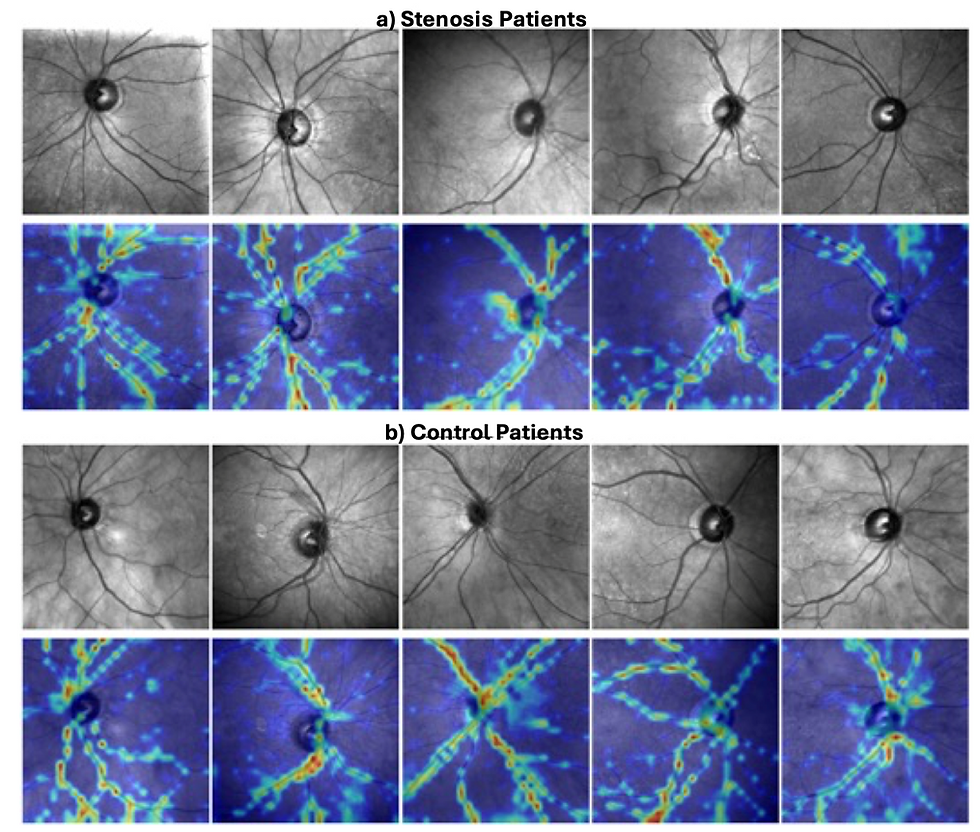

Explainability. (a) Attention maps from V-JEPA across multiple B-scans of an AMD

OCT, illustrating how the model dynamically tracks relevant anatomical features over the full

volume of the retina. (b) Comparison of attention of V-JEPA and DINOv2 on the same middle

slice (Slice 8) shows that V-JEPA focuses more precisely on clinically relevant structures

compared to a single-frame image-based model, highlighting the benefit of spatial context in

volumetric interpretation.

A broader shift for OCT AI

This work points to something larger than a single model comparison. It suggests that OCT foundation models may need a paradigm shift: from training on isolated slices to learning directly from full volumes. That shift would bring model design closer to how clinicians actually examine OCT data and could help unlock more clinically useful AI systems.

At the same time, our findings highlight an ecosystem challenge: open volumetric OCT datasets remain limited, while many public resources still provide only single-slice images. If the field is going to fully embrace 3D retinal AI, data sharing practices may need to evolve too.

Comments